In this last part of my blog series I will talk about what has recently become my favourite pastime while traveling by train: fixing the transcriptions of the focus group sessions. The group interviews I wrote about last time are automatically transcribed, but those transcriptions contain mistakes that can only be fixed by human ears. Meaning is context dependent, so a word that’s transcribed wrong can often easily be reconstructed if it’s taken in relation to the topic at hand, even if the exact pronunciation might be unclear.

For the sake of confidentiality, I will have to leave the context of the mistakes vague. The examples have been selected to highlight the issues I have, while staying clear without giving too much context. As a last disclaimer I want to mention that although it is fun to nitpick these tiny mistakes, the software transcribed hundreds of thousands of words correctly, which would be a full-time job on its own. Instead, it only requires comparing the transcript with the recording to clean up small errors.

To start off with a “funny” one, my name has been transcribed wrong as “Philippe von Dijk” in nearly every session. While this is a funny mistake at first glance, that’s not my name, the implications are quite severe. My name is located in the consent form, so if the participants consent to this Philippe to see the transcriptions, I would still be excluded. Additionally, the algorithm responsible fails to see me for who I am. Let’s say I don’t trust the machines to handle my taxes just yet. Since the participants are pseudo-anonymised by being given a number (which brings its own problematics with it, but that’s a different subject), this luckily wasn’t an issue for anyone besides the researchers.

The next errors are hypothetically related to the training data of the transcription software. Because companies aren’t open about the way their models are trained, I am careful not to draw conclusions that go too far. On the website of Amberscript, which we used to transcribe an in-person focus group, the CEO explains that they “collected terabytes of data on the way to get to such a high quality level” of “machine-made transcription.” To me, this implies that the company used their own transcriptions of recordings and meetings they provided to customers in order to train their model. That is the shaky interpretative step, but if you’re willing to go along with that there might be insight to be gained. The industries that the company caters to are “Universities/education”, “Governments” and “Legal” ones. I hope that they didn’t use confidential research and legal data to train their algorithm, in order to comply with the law, so that would leave the transcription and recording of lectures and public meetings. Even so, any of these settings could be considered “formal.”

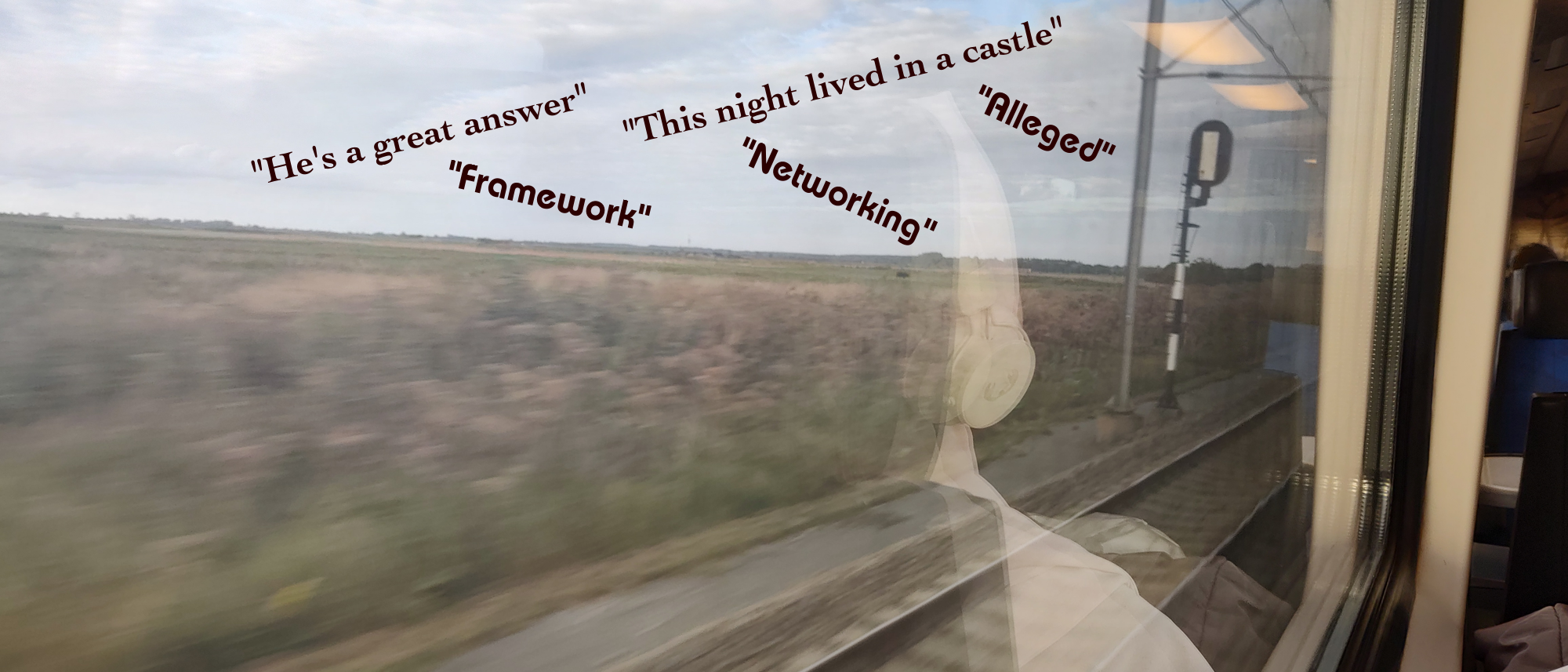

I hypothesize this to explain the transcription mistakes, which replace less formal words with words found in these other types of speech. As mentioned in another blog, the focus groups take the shape of conversational interviews. The software transcribed “trailer,” when talking about the marketing of media, with “framework.” While these words couldn’t look more dissimilar, pronouncing the words helps to understand the confusion. The word “framework” would fit quite well in a lecture on media analysis, but doesn’t make sense in this specific conversation.

It also put down “networking” instead of “nitpicking.” Where networking is a job market and industry related term, nitpicking is an informal way to point to detail-oriented critiques that can easily be disregarded instead. While nitpicking seems irrelevant by definition, when participants start referring to their problems with a game as such it benefits the research to delve deeper. These are finer details that would otherwise go unmentioned, and the use of this word shows that the participant approaches the topic self-reflexively. While the transcription software prides itself on its ability to “transcribe audio with foreign accents,” problems arise when the discussion becomes less formal. Although the data used contains “a sufficient sample size for both genders, as well as different accents and tones of voice,” these mistakes point to a possible blind spot related to vocabulary.

Lastly, there were some mistakes that I couldn’t place. These errors don’t fit the word groups or the contexts they are a part of, and do not point to the way Amberscript explains its “speech to text linguistic model.” Although they clarify it has “varied success,” this model is supposed to use the context of sentences to correct grammar by filling in unpronounced letters. Since this automated linguistic process is context bound, it surprised me to see the software transcribe “this night lived in a castle.” Of course, there is a silent “k” missing from the sentence, and Amberscript did transcribe the word correctly in the sentence after, but it is pretty clear which word that is pronounced like “night” would live in such a building. Why it made this error is beyond me.

And that is where I will leave this blog series: on a note of not-knowing. Because although I have learnt a lot about quantitative- and social science, there is a lot left to discover. While I feel like I have a grasp on the tools used in this discipline, this mistranscription shows how noticing errors doesn’t always result in understanding why it made the error. I hope these blogs show how reflecting on your own development in an academic field can be fun. I also hope that this last post, while technical, shows that the analysis of mistakes (even if they’re not your own) can result in potential insights or hypotheses for further research.